An Era Of True Fakes – Analysis

By Manohar Parrikar Institute for Defence Studies and Analyses (MP-IDSA)

By Kritika Roy*

Seeing is not always believing, especially in the Information Age. Information today is twisted and tweaked to such an extent that separating fact from fiction requires thorough research. Social media has long been rife with fake news, spread via WhatsApp messages or Facebook and Instagram posts. Indeed, fake news has been at the forefront of creating communal divides, aggravating disturbances in sensitive regions, radicalising individuals and tainting reputations.

With advances in artificial intelligence (AI) and machine learning, a new era of ‘deep fakes’ has emerged. Deep fake technology employs AI-based image blending methods to create realistic but deceptive images and videos. This makes differentiating fake from real even more difficult. Fake celebrity footage, propaganda videos and revenge porn are all products of deep fake technology.

Understanding Deep Fakes

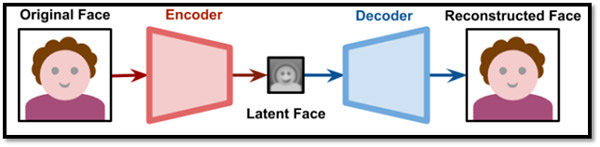

Figure 1 shows a face being sent through an encoder. The encoder creates a latent face, that is, a lower-dimensional representation of the face. A decoder then reconstructs a face from this latent representation. While the latent face retains the main features of the original face, the network can be trained to introduce subtle alterations. Training here means adjusting the weights of the neural network through optimisation until it produces the desired results. As the programmer has total control over the network’s shape, one can reconfigure the number of layers, the nodes per layer and their interconnectivity. The key to turning image A into image B lies in the edges that connect the nodes. Each edge carries a weight, and finding the weights that let the autoencoder generate the desired results is a time-consuming process.
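The encoder, latent face and decoder described above can be illustrated with a deliberately tiny sketch. It is not the method used by any particular deep fake tool: it assumes a "face" is just four numbers, uses single linear layers instead of deep networks, and trains with plain gradient descent. All names (`rand_matrix`, `train_step`, `reconstruction_error`) are hypothetical and chosen only for this illustration.

```python
import random

random.seed(0)
INPUT_DIM, LATENT_DIM = 4, 2   # toy sizes; real face images have far more values

def rand_matrix(rows, cols):
    """One small random weight per edge between two layers."""
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(weights, vec):
    """Apply one fully connected linear layer."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]

def reconstruction_error(encoder, decoder, faces):
    """Mean squared difference between each face and its reconstruction."""
    total = 0.0
    for face in faces:
        rebuilt = matvec(decoder, matvec(encoder, face))
        total += sum((r - f) ** 2 for r, f in zip(rebuilt, face))
    return total / len(faces)

def train_step(encoder, decoder, face, lr=0.02):
    """Nudge every weight downhill on the reconstruction error of one face."""
    latent = matvec(encoder, face)
    err = [r - f for r, f in zip(matvec(decoder, latent), face)]
    # Error signal propagated back through the decoder to the latent layer.
    latent_grad = [sum(decoder[i][k] * err[i] for i in range(INPUT_DIM))
                   for k in range(LATENT_DIM)]
    for i in range(INPUT_DIM):
        for k in range(LATENT_DIM):
            decoder[i][k] -= lr * err[i] * latent[k]
    for k in range(LATENT_DIM):
        for j in range(INPUT_DIM):
            encoder[k][j] -= lr * latent_grad[k] * face[j]

# Toy "faces": 4 values that really depend on only 2 hidden factors.
faces = []
for _ in range(20):
    a, b = random.random(), random.random()
    faces.append([a, b, a, b])

encoder = rand_matrix(LATENT_DIM, INPUT_DIM)   # 4 values -> 2 latent values
decoder = rand_matrix(INPUT_DIM, LATENT_DIM)   # 2 latent values -> 4 values

error_before = reconstruction_error(encoder, decoder, faces)
for _ in range(300):                            # repeated passes over the data
    for face in faces:
        train_step(encoder, decoder, face)
error_after = reconstruction_error(encoder, decoder, faces)

latent_face = matvec(encoder, faces[0])         # the compressed representation
print("latent size:", len(latent_face))
print("error before / after training:", error_before, error_after)
```

Even in this toy setting the weights have to be adjusted over thousands of small steps, which hints at why training on real images is so time-consuming.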

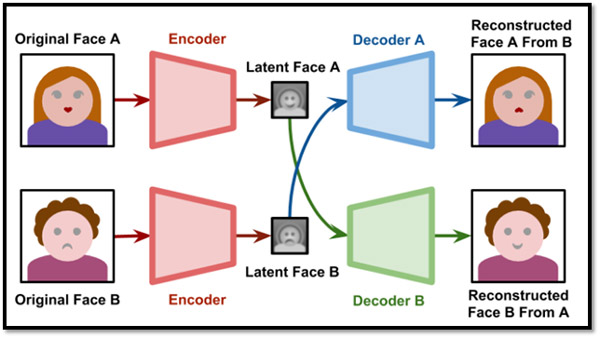

In a face swap, as shown in figure 2, the latent face of image A is passed to decoder B, which then tries to reconstruct a face using the information from image A. Thus, it re-creates an image akin to the original, but with modifications so subtle that the fake is hard to distinguish from the real.
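The swap step can be sketched in the same toy style. The sketch below assumes one encoder shared by both people and a separate decoder for each, which is a common way of setting up such a swap but is not confirmed by the article as the exact arrangement in figure 2. The data, the linear layers and all names (`sgd_step`, `mean_error`, `decoder_a`, `decoder_b`) are hypothetical.

```python
import random

random.seed(1)
DIM, LATENT = 4, 2   # toy sizes, assumed for illustration only

def rand_matrix(rows, cols):
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(weights, vec):
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]

def sgd_step(enc, dec, face, lr=0.02):
    """One gradient step on the reconstruction error of a single face.
    Updates the shared encoder and the given decoder in place."""
    latent = matvec(enc, face)
    err = [r - f for r, f in zip(matvec(dec, latent), face)]
    latent_grad = [sum(dec[i][k] * err[i] for i in range(DIM))
                   for k in range(LATENT)]
    for i in range(DIM):
        for k in range(LATENT):
            dec[i][k] -= lr * err[i] * latent[k]
    for k in range(LATENT):
        for j in range(DIM):
            enc[k][j] -= lr * latent_grad[k] * face[j]

def mean_error(enc, dec, faces):
    total = 0.0
    for face in faces:
        rebuilt = matvec(dec, matvec(enc, face))
        total += sum((r - f) ** 2 for r, f in zip(rebuilt, face))
    return total / len(faces)

# Two toy "people" with different fixed patterns in their 4 values.
faces_a, faces_b = [], []
for _ in range(20):
    a, b = random.random(), random.random()
    faces_a.append([a, b, a, b])      # person A
    c, d = random.random(), random.random()
    faces_b.append([c, d, -c, -d])    # person B

encoder = rand_matrix(LATENT, DIM)    # shared by both people
decoder_a = rand_matrix(DIM, LATENT)  # only ever trained on person A
decoder_b = rand_matrix(DIM, LATENT)  # only ever trained on person B

before_a = mean_error(encoder, decoder_a, faces_a)
before_b = mean_error(encoder, decoder_b, faces_b)
for _ in range(300):
    for face_a, face_b in zip(faces_a, faces_b):
        sgd_step(encoder, decoder_a, face_a)
        sgd_step(encoder, decoder_b, face_b)
after_a = mean_error(encoder, decoder_a, faces_a)
after_b = mean_error(encoder, decoder_b, faces_b)

# The swap: encode a face of A, but reconstruct it with B's decoder.
latent_a = matvec(encoder, faces_a[0])
swapped = matvec(decoder_b, latent_a)
print("A's face:      ", faces_a[0])
print("swapped output:", swapped)
```

Because decoder B has only ever been trained to reconstruct person B, whatever latent face it receives tends to come out in B's pattern. That is the intuition behind the swap, although this linear toy says nothing about how convincing the result is with real images.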

The Dangers

Deep fakes can be used to target people of interest or the general public, depending on the motive. The former may be targeted for public trolling or revenge, while the latter may be targeted to garner false public support and to spread cyber propaganda. The potential threat of deep fakes is that they can be used to heavily influence elections or trigger disinformation campaigns. Deep fakes can create trust issues in an already polarised political environment. They could be misused by anyone at any time.

In a sampler produced by Jordan Peele in association with BuzzFeed, Peele ventriloquizes former US President Barack Obama, who can be seen talking about random things in the video. It is so realistic that one could easily be convinced it is authentic. Now imagine a video surfacing online, days before an election, showing a candidate talking gibberish or involved in an unethical activity. Such an instance would not only hurt the candidate’s support but also lead to further confusion and clashes. In fact, many fake videos of prominent personalities, leaders and actors can be found online. These days, free applications that offer user-friendly ways to swap faces or create fake videos, such as FakeApp (an easy-to-use platform for making forged media), are available online. The easy availability of such tools exacerbates the problem of deep fakes.

Cyber stalkers can also misuse the technology against someone who refuses to give in to their demands. A single online image is sufficient for a teenager to create a false, derogatory video of a love interest and spread it around, adding to the emotional and mental stress of the victim. Another use of deep fakes could be illegally unlocking smart devices, as face recognition technology is being built into most smart devices and even smart locks. This can lead to misuse of data or even identity theft.

The Way Ahead

With the growing dangers of deep fakes, researchers have come up with measures that can help spot them. One such measure, as adopted by Apple iPhones, is live face detection, in which the phone camera checks for an actual face as opposed to a photograph or video. Another means of detecting a fake video is to look closely at its background activity and liveliness. A real video would generate more metadata due to background activities than one that is morphed. One can also protect videos from being misused by “watermarking” them. A watermark is like a digital hologram that can aid in distinguishing the real from the fake.
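The article does not say which watermarking scheme it has in mind. As one simple illustration, the sketch below hides a bit pattern in the least significant bit of each pixel value, a so-called fragile watermark that breaks when the pixels are altered. The frame, the bit pattern and the function names are hypothetical, and real systems use far more robust schemes.

```python
def embed_watermark(pixels, bits):
    """Return a copy of pixels with each watermark bit stored in the
    lowest bit of one pixel (changes each value by at most 1)."""
    if len(bits) > len(pixels):
        raise ValueError("watermark is longer than the image")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit
    return marked

def extract_watermark(pixels, length):
    """Read back the lowest bit of the first `length` pixels."""
    return [p & 1 for p in pixels[:length]]

frame = [200, 13, 77, 54, 129, 240, 8, 91]   # toy 8-pixel grayscale frame
mark = [1, 0, 1, 1, 0, 0, 1, 0]              # secret pattern known to the owner

marked = embed_watermark(frame, mark)
tampered = [p + 1 for p in marked]           # e.g. a slight brightness change

print("intact frame carries mark:  ", extract_watermark(marked, len(mark)) == mark)
print("tampered frame carries mark:", extract_watermark(tampered, len(mark)) == mark)
```

The design choice here is fragility: the mark is meant to be destroyed by edits, so its absence signals that a frame may have been altered. A scheme intended to survive compression or resizing would need a different approach.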

The technology for detecting deep fakes is only a means of predicting whether a video is authentic. Moreover, with the plethora of videos and photographs available online, it is a Herculean task for authorities to check each and every video for authenticity. Therefore, civil society should be made aware of the need to assess the credibility of the source of any video or voice clip. It is important to train citizens and make them more prepared for, and resilient to, disinformation campaigns.

A major obstacle in countering fake news has been the lack of laws against it. Disinformation is not a crime in a democracy. However, the increasing menace of deep fakes may lead governments to take preventive measures such as content-based regulation. This may, of course, create another dilemma pertaining to individual rights versus security. In the coming days, deep fakes are expected to see an exponential rise. It is important to understand that deep fakes could be weaponized in ways that weaken the fabric of democratic society itself.

Hence, it is essential that governments start investing in effective preventive measures for countering deep fakes. Building a strong AI and deep learning resource base that can stand at the forefront of fighting fakes as well as deep fakes should be the starting point. Additionally, individual websites and private players should keep track of the authenticity of the content being uploaded or circulated. Similarly, individuals should take cognizance of the threat deep fakes pose and verify any content before forwarding it. Unless the government, industry and civil society come together to tackle this menace, deep fakes will remain a difficult cat to bell.

Views expressed are of the author and do not necessarily reflect the views of the IDSA or of the Government of India.

*About the author: Kritika Roy is a Research Analyst at the Institute for Defence Studies and Analyses.

Source: This article was published by IDSA