Assessing Risk Of Mutation Due To Residual Radiation From Fukushima Nuclear Disaster

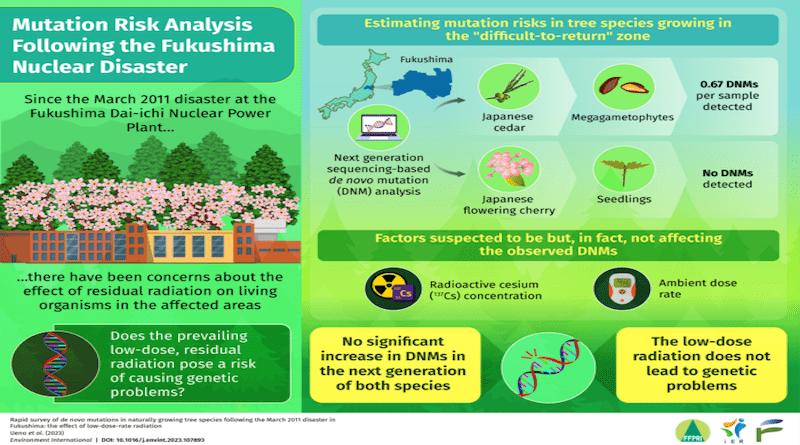

Ionizing radiation from nuclear disasters is known to be harmful to the natural environment. The Fukushima Dai-ichi Nuclear Power Plant meltdown that occurred in 2011 is a prominent example of such a disaster in recent memory. Even a decade after the incident, concerns remain about the long-term effects of the radiation. In particular, it is not clear how the residual low-dose radiation might affect living organisms at the genetic level.

The brunt of such a disaster is usually borne by the plants inhabiting the contaminated areas, since they cannot move. This, however, makes them ideal for studying the effects of ionizing radiation on living organisms. Coniferous plants such as the Japanese red pine and fir have, for instance, shown abnormal branching after the Fukushima disaster. However, it is unclear whether such abnormalities reflect genetic changes caused by the prevailing low-dose-rate radiation in the area.

To address this concern, a team of researchers from Japan developed a rapid and cost-effective method to estimate the mutation risks caused by low-dose-rate radiation (0.08 to 6.86 μGy h⁻¹) in two widely cultivated tree species of Japan growing in the contaminated area. They used a new bioinformatics pipeline to evaluate de novo mutations (DNMs), that is, genetic changes that were not present in the parent and therefore not inherited, in the germline of the gymnosperm Japanese cedar and the angiosperm flowering cherry.

The study, led by Dr. Saneyoshi Ueno from the Forestry and Forest Products Research Institute, was recently published in the journal Environment International and involved contributions from Dr. Shingo Kaneko from Fukushima University. “People living in the affected areas are worried and need to feel safe in their daily lives,” says Dr. Kaneko when asked about the motivation behind their study. “We wanted to clear the air of misinformation regarding the biological consequences of the nuclear power plant accident.”

For sampling Japanese cedar, the team first measured the radioactive cesium (¹³⁷Cs) levels of the cone-bearing branches. The cones were then used to collect the seeds, which were germinated, and the remaining megagametophytes were used for DNA extraction. For the Japanese flowering cherry, an artificial crossing experiment was performed, followed by seed collection and DNA extraction. The samples were subjected to restriction site-associated DNA sequencing, which compared the DNA sequences present in the offspring seed to those present in the parent. The DNMs were detected using a bioinformatic pipeline developed by the authors.
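The core idea of comparing offspring sequences with those of the parent can be illustrated with a minimal Python sketch. This is not the authors' pipeline: the function name `candidate_dnms`, the dictionary layout, and the toy genotypes are all hypothetical, and a real analysis would also need read-depth and sequencing-error filters that are omitted here. The sketch assumes one allele per site in the offspring sample and flags sites where that allele is not seen in the parent.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical site key: (locus name, position within the locus).
Site = Tuple[str, int]


def candidate_dnms(parent: Dict[Site, Set[str]],
                   offspring: Dict[Site, str]) -> List[Site]:
    """Return sites where the offspring allele is absent from the parent.

    Illustrative assumption: each offspring sample carries a single
    allele per site, and the parent's alleles are given as a set.
    """
    candidates = []
    for site, allele in offspring.items():
        parent_alleles = parent.get(site)
        if parent_alleles is None:
            # Site was not genotyped in the parent, so it cannot be judged.
            continue
        if allele not in parent_alleles:
            candidates.append(site)
    return sorted(candidates)


# Toy genotypes, invented purely for illustration (not data from the study).
parent = {
    ("locus1", 12): {"A", "G"},
    ("locus2", 40): {"C"},
    ("locus3", 7): {"T"},
}
offspring = {
    ("locus1", 12): "G",  # matches a parental allele
    ("locus2", 40): "T",  # not seen in the parent: candidate DNM
    ("locus4", 3): "A",   # missing in the parent: skipped
}

print(candidate_dnms(parent, offspring))  # [('locus2', 40)]
```

Counting such candidate sites per sample, after suitable filtering, is one way a per-sample DNM figure could be obtained.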

Interestingly, the team found no DNMs for the Japanese flowering cherry and an average of 0.67 DNMs per megagametophyte sample for the Japanese cedar in the “difficult-to-return” zone. Moreover, neither the ¹³⁷Cs concentration nor the ambient dose rate had any effect on the presence or absence of DNMs in Japanese cedar and flowering cherry. These findings suggest that the mutation rate in trees growing in contaminated areas did not increase significantly owing to the ambient radiation. “Our results also suggest that mutation rates vary across lineages and are largely influenced by the environment,” highlights Dr. Ueno.

The study is the first to use DNM frequency for assessing the after-effects of a nuclear disaster. With the number of nuclear power plants increasing globally, there is a growing risk of nuclear accidents. When asked about their study’s future implications, Dr. Ueno remarks, “The method developed in our study can not only help us better understand the relationship between genetics and radiation but also perform hereditary risk assessments for nuclear accidents quickly.”

For now, this is as reassuring as it can get.