Defending The West From Russian Disinformation: The Role Of Leadership – Analysis

Published by the Foreign Policy Research Institute

By Eriks Selga and Benjamin Rasmussen*

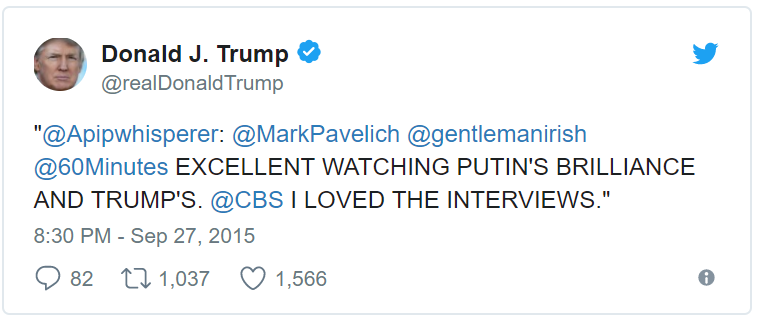

(FPRI) — Russian disinformation has led a blitzkrieg over the West, and the implications are grave. Germany witnessed the scandal of “Lisa,” France saw false claims of Saudi Arabian financing for President Macron’s campaign, Norway was accused of stealing children from their families, and Ukraine was reported as crucifying a three-year-old child. Not even the United States could escape the Kremlin’s reach; the integrity of the U.S. electoral system was brought into question after Kremlin-financed troll activity and social media advertising helped Donald Trump win the election.

Moscow’s campaigns are far-reaching both horizontally across nations, and vertically within societies. If the United States is to become resilient against these information threats, Washington must craft a strategy that focuses on Russia’s primary target: the mass public. Developing awareness at the grassroots level requires strong guidance from national leaders. Considering the Baltic states have done this for decades with effective results, the United States should lean heavily on takeaways from the Baltic experience when crafting a strategy to defend the U.S. information space.

Understanding the Gamut of Russian Disinformation

The breadth of the modern Russian propaganda machine underscores the urgent need for a counter-disinformation strategy. Moscow has utilized state-controlled internet trolls and bots since 2011, when they were mobilized to gain the support of the Russian middle class in an internet environment not yet under state control. Ever since, Russia has continued to develop a comprehensive methodology that uses a wide network of sources and internet algorithms to push biased content to target audiences and achieve national objectives. A 2016 resolution of the European Parliament accentuated the scope of instruments employed by the Russian Government: “think tanks (e.g. Russkiy Mir), special authorities (Rossotrudnichestvo), multilingual TV stations (such as RT), pseudo news agencies and multimedia services (e.g. Sputnik), cross-border social and religious groups . . . social media and internet trolls.”

Disinformation is often tailored to the local contexts and incidents familiar to the target audience. Campaigns generally bombard target societies with dozens of biased or false stories across the internet until one gets recirculated by more reputable media outlets. While disinformation topics vary widely, they generally center around one of several themes, including radicalization, delegitimization, demoralization, passivation, and deterrence. Concurrently, the stories lend credibility to alternative information outlets by claiming that traditional sources are untrustworthy, thereby creating an “information fog” that sows confusion about the validity of sources. Furthermore, disinformation pieces are often created to corroborate planted stories. Through this method, Russia can impair public decision-making capabilities and manipulate opinion.

A report by the Center for European Policy Analysis found Russian strategy to be highly adaptive on a country-by-country basis, with disinformation targets ranging from specific individuals to the political health of the state. The European Union’s East StratCom Task Force highlighted Russia’s use of different tools in different areas. Campaigns directly target Russian-speaking minorities in the Baltic states, purposely fill Central Europe with “alternative” websites, and flood Scandinavia with comment trolls. Vulnerabilities are carefully sought out to create fitting narratives for the target audience. In the United States, Russian trolls frequently pander to the views of extremists; Twitter bots were found inciting partisan blame for the Charlottesville massacre soon after the event took place, seeking to further national divisions. Disinformation has become endemic across American society; a recent Pew Research Center study found that around a third of Americans encounter fake news online frequently, while almost a quarter have shared such news. When fake news is encountered, Americans are often fooled; an Ipsos study that presented fake news headlines to participants found that the vast majority believed these stories to be either “very” or “somewhat accurate.” Most Americans struggle with what to believe online, making the internet a fertile breeding ground for disinformation.

Baltic Leadership and Disinformation: An Exemplary Model

Disinformation in the Baltic states is disseminated primarily through Russian-controlled media and targets Russian-speaking minorities. The thematic focus of these campaigns is the failure of the state. Recurring themes include economic and social difficulties, state corruption, targeted discrimination against Russian minorities, and NATO/EU support for the broken status quo. Similar campaigns have been prevalent in the region since the collapse of the Soviet Union, but have escalated since the Baltic states began to pursue NATO and EU membership in the late 1990s. Decades of experience against Russian propaganda have allowed the Baltic states to hone their resistance strategies, resulting in a tangible grassroots resiliency.

Baltic populations differ greatly from their U.S. counterparts in terms of susceptibility to disinformation. For example, one study that presented Latvians with fake headlines found that, on average, only a quarter believed the disinformation was true. Furthermore, only 5 percent of Latvians have not encountered disinformation in mass media, and over half of respondents report the impact of fake news as an important policy issue. These studies suggest that Baltic populations are generally more aware of the disinformation threat and are harder to fool.

The Baltic information bulwark is built on unusually broad civil and public sector participation in counter-disinformation efforts. In Lithuania, volunteers developed a network of “elves” who counter trolls by following their activity and exposing it as fraudulent in the same forums that disseminate propaganda. Estonia has created its own national broadcasting channel for Russian speakers, and Latvia is implementing media literacy as part of school curricula. The Ministry of Defense in Latvia publicly declared that a series of posts claiming NATO was going to mount psychological offensives against Russia were complete falsehoods. All Baltic states have taken various measures against Russian TV channels, intermittently fining and suspending them when their transmissions have constituted illegal agitprop. Finland has already learned from these examples and successfully implemented similar maneuvers to increase its own resiliency against disinformation.

The idea of Russian disinformation is common knowledge across the three neighboring states, and efforts to increase this public awareness originate from the top. The presidents of the Baltic states (Latvia’s Raimonds Vejonis, Lithuania’s Dalia Grybauskaite, and Estonia’s Kersti Kaljulaid) have all taken strong public stances against malicious disinformation campaigns by affirming their existence and leading various response efforts. The combined initiatives of Baltic leaders, the public sector, and the private sector, which consistently echo charges of the Russian disinformation threat, create widespread awareness of the problem. As a result, Baltic populations are more critical when analyzing the media and less susceptible to modern information warfare tactics. Most importantly, the nudging at different levels of society has raised awareness without stoking panic about Russia or other possible perpetrators. Information warfare is considered similar to hacking: a matter of daily life that must be dealt with.

The clarity of positioning by Baltic leaders is a stark contrast to U.S. practice. President Obama, for example, responded to Russia’s information threat not through a straightforward approach towards the public, but rather through opaque discussions with Facebook and other industry giants on their role in the information war. The indecisiveness towards broader public acknowledgment allowed disinformation employed by Russia in favor of its Ukrainian efforts to go largely without criticism from the American public. Even more worrisome are efforts by President Trump and Secretary of State Rex Tillerson to downplay Russian involvement in American politics altogether. The lack of a clear stance by U.S. leadership towards information feeds the dissonance of narratives, which resonates with the American people. While they are aware of the presence of disinformation, they may fail to understand the full extent of its underlying geopolitical purpose and let down their guard.

Leading the Charge to Grassroots Recognition

If Washington seeks to mitigate disinformation risks, U.S. policymakers must set a new long-term strategic objective for the information space. While studying the domestic disinformation threat and countering it abroad can inform future changes in U.S. tactics for countering the foreign disinformation threat, these initiatives will not be sufficient in creating domestic resilience on their own. Resiliency to disinformation begins at the grassroots level, as the Baltic states have demonstrated. However, grassroots recognition must begin with high-level recognition of the disinformation threat from the most trusted members of the U.S. government.

Therefore, U.S. officials must implement a public awareness campaign that can transform the American public, currently vulnerable to fake news and Russian influence, into a resilient populace aware of the growing disinformation threat. Trust in U.S. defense agencies is high across domestic audiences, so warnings of foreign disinformation should come directly from head defense officials, preferably Secretary of Defense James Mattis or President Trump. These messages could be echoed by Congressmen across the aisle to amplify public awareness.

The positive effects of implementing these tactics are twofold. First, they would increase widespread public recognition of the problem and organically increase the filtering capabilities of Americans. Second, they would have positive spillover effects on the West’s overall ability to defend against disinformation assaults, steering allied states towards a stronger, more cohesive partnership against a hybrid threat without precedent. High-level recognition alone may not fully solve the disinformation problem, but it would be a powerful step for the United States and its Western partners.

*About the authors:

Eriks K. Selga is an Associate Scholar at FPRI’s Eurasia Program and a lawyer at PricewaterhouseCoopers Legal Latvia.

Benjamin Rasmussen is a Military and Political Intelligence Specialist at the Global Intelligence Trust LLC and a researcher for the Security and Foreign Policy Center of the Roosevelt Institute at Yale.

Source:

This article was published by FPRI
