Probing The Inner Workings Of High-Fidelity Quantum Processors

The Science

Tiny quantum computing processors built from silicon have finally surpassed 99 percent fidelity in certain logic operations (“gates”). Quantum computers store information in the quantum state of a physical system (in this case, two silicon qubits), then manipulate that state to perform a calculation in a manner that isn’t possible on a classical computer. Fidelity is a measure of how close the final quantum state of the real-life qubits is to the ideal case. If the fidelity of logic gates is too low, calculations will fail because errors will accumulate faster than they can be corrected. Fault-tolerant quantum computing is believed to require gate fidelities above about 99 percent.
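To make the idea of fidelity concrete, here is a minimal sketch that is not taken from the experiments. It assumes pure two-qubit states and uses the standard overlap formula, fidelity = |⟨ideal|actual⟩|². The helper names and the 0.05-radian miscalibration are hypothetical, chosen only for illustration.

```python
import math

def state_fidelity(ideal, actual):
    """Fidelity |<ideal|actual>|^2 between two normalized pure states."""
    overlap = sum(a.conjugate() * b for a, b in zip(ideal, actual))
    return abs(overlap) ** 2

def entangled_state(theta):
    """Two-qubit state cos(theta)|00> + sin(theta)|11>.

    Amplitudes are listed in the order 00, 01, 10, 11.
    """
    return [complex(math.cos(theta)), 0j, 0j, complex(math.sin(theta))]

# Target state: (|00> + |11>) / sqrt(2).
ideal = entangled_state(math.pi / 4)

# Hypothetical imperfect result: the angle is off by 0.05 radians.
actual = entangled_state(math.pi / 4 + 0.05)

fidelity = state_fidelity(ideal, actual)
print(f"fidelity = {fidelity:.4f}")  # about 0.9975 for this small error
```

In this toy case a small miscalibration still leaves the fidelity above 99 percent. Real gate fidelities are estimated from many measurements rather than from a single known state.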

Three research groups demonstrated more than 99 percent fidelity for “if-then” logic gates between two silicon qubits. This required precisely measuring failure rates, identifying the nature and cause of the errors, and fine-tuning the devices. The researchers used a technique called gate set tomography to achieve this in two of the three experiments. The technique combined the results of many separate experiments to create a detailed snapshot of the errors in each logic gate. The researchers were able to make a precise determination of the error generated by different sources and fine-tune the gates to achieve error rates below 1 percent.

The Impact

Quantum computing may be able to solve certain problems, such as predicting the behavior of new molecules, far faster than today’s computers. To do so, researchers must build qubits, engineer precise couplings between them, and scale up systems to thousands or millions of qubits.

Researchers expect qubits made of silicon to scale up better than the qubits used in today’s testbed quantum computers, which rely on either trapped ions or superconducting circuits. Achieving high-fidelity logic gates opens the door to silicon-based testbed quantum computers. It also demonstrates the power of detailed error characterization to help users pinpoint error modes and then work around or eliminate them.

Summary

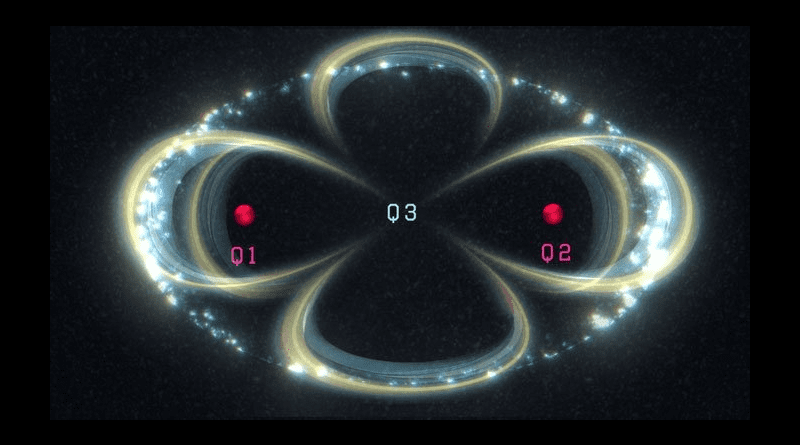

Qubits – protected, controllable 2-state quantum systems – lie at the heart of quantum computing. Quantum computing processors are built by assembling an array of at least two qubits (and, researchers hope, someday thousands or millions), with an integrated control system that can perform logic gates on each qubit and between pairs of qubits. Their performance and capability are limited by errors in the logic gates. High-fidelity gates have low error rates. Once the error rate is less than a certain threshold – which scientists believe to be about 1 percent – quantum error correction can, in principle, reduce it even further. Beating this threshold in laboratory experiments is a major milestone for any qubit technology.
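A rough way to see why error rates matter is to ask how long a calculation can run with no error correction at all. The sketch below is an illustration, not part of the research. It assumes a crude model in which every gate fails independently, so the chance that n gates all succeed is the fidelity raised to the power n. The function name and the 50 percent cutoff are hypothetical choices.

```python
import math

def gates_until_below(fidelity, target=0.5):
    """Largest number of gates n for which fidelity**n is still at least target.

    Assumes independent gate errors and no error correction.
    """
    return math.floor(math.log(target) / math.log(fidelity))

for fidelity in (0.90, 0.99, 0.999):
    n = gates_until_below(fidelity)
    print(f"fidelity {fidelity}: about {n} gates before success drops below 50%")
```

Under this simplified model, 99 percent fidelity allows only a few dozen uncorrected gates, which is why error correction is needed for long calculations.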

Which kinds of errors occur also matters greatly for quantum error correction. Some errors are easier to eliminate or correct; others may be fatal. Quantum computing researchers from the Department of Energy (DOE)-funded Quantum Performance Laboratory worked with Australian experimental physicists to design a new kind of gate set tomography customized to a 2-qubit silicon qubit processor. They used it to measure the rates of 240 distinct types of possible errors on each of six logic gates. Of those possible errors, 95 percent did not occur in the experiments, and the remaining errors added up to less than 1 percent infidelity. Research groups in Japan and the Netherlands reported similar results simultaneously, with the Dutch group also using the DOE-funded pyGSTi gate set tomography software to confirm their demonstration.
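The article does not say how the figure of 240 error types is reached. One standard way to classify small errors on a gate acting on two qubits gives the same number, and the sketch below counts them under that assumption. The four category names and the function name are assumptions for illustration, not details taken from the experiments.

```python
from math import comb

def count_error_types(num_qubits):
    """Count elementary error types in one common classification (an assumption here).

    Each type is labeled by one non-identity Pauli operator, or by a pair of them.
    """
    paulis = 4 ** num_qubits - 1  # non-identity Pauli operators on the qubits
    return {
        "hamiltonian": paulis,           # coherent, rotation-like errors
        "stochastic": paulis,            # random Pauli errors
        "correlated": comb(paulis, 2),   # labeled by pairs of Paulis
        "active": comb(paulis, 2),       # labeled by pairs of Paulis
    }

counts = count_error_types(2)
total = sum(counts.values())

# The article reports that 95 percent of the possible errors did not occur.
remaining = round(total * (1 - 0.95))

print(counts)
print(f"total error types per gate: {total}")
print(f"types left if 95 percent are absent: {remaining}")
```

With this counting, two qubits give 15 + 15 + 105 + 105 = 240 types, so roughly a dozen types per gate would account for the remaining infidelity.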