Data For Sale – Analysis

Consumers do not realize the value of their personal data, and policies are not keeping pace with the growth of data collection and sales.

By Susan Froetschel*

The scandal involving Facebook and datamining company Cambridge Analytica dramatically confirms the old adage of “no free lunch.” Facebook’s more than 2 billion users are waking up to the fact that the “free” online site, part of their daily routine, extracted a stiff price: valuable personal data. The only way to protect one’s data is vigilance by all, though that may well be the equivalent of closing the barn door after the proverbial horse escaped.

News reports that Cambridge Analytica swept up details on millions of Facebook users – then used those details for targeted political advertising in many countries – jolted industry, regulators and users. Yet users consented to data exchanges, often impatiently, without reading the pages of small print in terms-of-service agreements. Many companies profit handsomely from knowing the range and length of users’ phone calls, driving patterns, family history and genetics, purchase details through credit cards and store discount cards, insurance claims for health problems, and games that measure frustration levels or ability to follow rules.

Of course, gigantic Facebook is not alone. Big-data analysis is big business. Companies continue to discover new value in cross-industry exchanges, combining forces to monetize datasets to improve services, reduce fraud, attract new customers or meet regulatory requirements. Cambridge Analytica is not alone either. In China, the Shanghai Data Exchange, started in 2017, offers a platform for trading all types of consumer information gathered from telecommunications, credit cards and more with the aim of drawing technology firms to the city. The exchange anticipates accounting for one third of China’s data trading volume by 2020. Global revenues for data analytics are expected to exceed $200 billion in 2020, according to International Data Corp., which points out the rush to share data could be prone to conflicts of interest.

Collecting data to assess target groups is not new. Decades ago, telemarketing firms relied on typists to go through phone books, cross-listing names and numbers with other public lists. Librarians understood the potential privacy pitfalls early on and endorsed policies to protect confidentiality – before computers became widespread, libraries stopped using cards listing individuals who had previously borrowed books. Likewise, the college application process has long been a data-mining exercise to determine which applicants are likely to enroll and graduate.

Patients, borrowers and students who fill out offline application forms are not exempt from becoming targets. Paper forms are quickly scanned into computer files. Large community events and fairs offer opportunities for data gathering. Hundreds of vendors attending large home shows hold contests to gather potential customer contacts, and job fairs collect resumes to study the evolving job market and reap new employer contacts.

Computers made data collection easy. Any type of data can be packaged and marketed. Cities already provide data on properties, taxes and public health as a public service, and the World Economic Forum suggests communities could do more to distribute data on assets from traffic to waste collection. Associations offer services and information on how to research and package data. To improve efficiency, utilities in India, Europe and the United States rely on smart meters to monitor and predict patterns of energy and water use. Committees and policies for monitoring data use and information governance so far are not keeping up with the growing numbers of organizations gathering and trading data.

Data products can be specific, offering details about individuals, or aggregated to relay broad trends. Laws in the United States and Europe protect individual health, education or financial information, but do not ban aggregation as described in privacy policies, terms of agreement and license agreements. For example, the Common Application – an online form required for applying to many US colleges – details its policy: Third parties and contracted researchers may have access to application and related information which can then be packaged as “non-personal identifying demographic, historical, generic, analytical, statistical or aggregate data obtained from other sources, and/or data.”

Health is an especially sensitive area, and privacy laws, even the strict new data protections to be imposed by the European Union in May, include exceptions. The EU law requires that patient data “be collected for a specific explicit and legitimate purpose” but allows that same data to “be re-used for research” for the public-interest purpose of driving innovative treatments. The same law limits how long patient data can be stored, “except for archiving and scientific research purposes.” Explicit patient consent is not required as long as safeguards masking identity are in place. Of course, as the Cambridge Analytica case shows, data collected ostensibly for “academic” research can be deployed for other operations.

Financial firms collaborate on data collection to avoid risks. Insurers form special units for collecting drivers’ data. Digital strategies fuel growth, explains Boston Consulting Group. Companies combine online business processes with communications and services to gather data. App developers respond with entertaining quizzes, surveys and games designed to entice consumers to hand over more data. The harvest of Facebook profiles began with a small personality test: fewer than 300,000 users took part for a tiny sum, and in the process millions of their friends were dragged into the net.

Fewer than 20 percent of third-party app developers for Facebook’s platform are based in the United States. Developers like Elitech in India provide custom-designed applications or games that assess target audiences and prioritize user engagement. Developers can use games to assess user performance and personality with small tasks, from placing an order to solving problems. Facebook encourages developers around the world with Developer Circles, anticipating that local app development will lead to more local users.

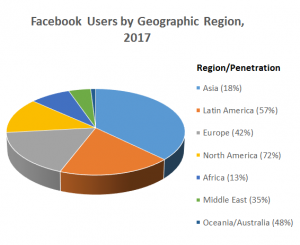

Asia leads the world with more than 30 percent of 20 million app developers, while Europe and North America each have about 30 percent. The Economic Times reports that India leads the world in Facebook users and has the second-largest base of Facebook developers. Developers and social media firms worry about new regulations disrupting the growing industry. Apps and games available from Apple’s iTunes Store went from a few hundred in 2009 to more than 3 million in 2017, with downloads in the billions.

Apps take advantage of the universal desires to play, understand ourselves, or compare how we perform with others. Experts analyze user choices, associating interests as detected by searches and clicks with individual behavior, hunting for patterns and correlations. Some companies offer discounts to customers deemed as reliable or credit-worthy; other firms hunt for gullible, impulsive spenders.

Unorganized data may seem worthless, and Facebook and countless others readily opened the gates to app developers and advertisers with little attention to the ultimate goals behind data transfers. Soon after news emerged about Cambridge Analytica’s use of Facebook profiles, Mark Zuckerberg issued an apology, admitting that even social media executives had not realized the full potential of their platforms and how many insights might be gleaned. He conceded that, when launching Facebook in 2004, he had not imagined the site could be accused of changing the course of an election. To Recode, he admitted to feeling “uncomfortable sitting here in California at an office, making content policy decisions for people around the world.” His strategy is for communities to decide their values and rules for Facebook.

Users have a choice on what to share and with whom. Like it or not, big-data analysis influences communities and workplaces, and users have a responsibility to read lengthy policies with care. A lesson emerging from the Cambridge Analytica and Facebook debacle is that those who refuse to surrender data cannot evade the consequences, especially when so many other users do share. Millions of friends whose data was harvested may not have given specific consent, but in the end that did not matter.

*Susan Froetschel is editor of YaleGlobal Online and the author of five novels including Fear of Beauty and Allure of Deceit, both set in Afghanistan.